Edit: After I published this post, Mojeek contacted me and pointed out that they do have a public monetization plan, which I had somehow not come across when doing my research about them. The text of their plan fully satisfies the concerns I listed below, including addressing what I consider appropriate advertising. So, assuming they continue to execute in accordance with the principles they describe in their post, my concerns regarding their monetization strategies have been satisfied. They have also fixed the 403 - Forbidden problem with Cyrillic searches. I would hope that they would publish a timeline for when they anticipate being able to support other language sets, but I am a patient and understanding man regarding the difficulties of bringing a search engine online with useful results in every world language. I am leaving the previous text of this post intact for historical reasons and so that users can understand the monetization concerns that I have when considering a search engine.

Let me just start this post by saying that trying to find a good search engine is one of my least favorite activities. Mostly because I don’t feel like I have any good options, so I am left to pick whatever I determine to be the least bad among what I can find.

About a month ago a user pointed me to a post where Startpage declared their intention to drop support for browsers that did not have JavaScript enabled. This, of course, is disqualifying for a search engine to be included in Privacy Browser, and especially as the default search engine. The post in question originally said that a bunch of cool features that they wanted to implement required JavaScript, and so they were slowly rolling out by region a new version of their search engine that would not work with JavaScript disabled. In my testing this new version was not yet active in my region, but I began making plans for removing Startpage from Privacy Browser. Part of that was to post on Mastodon asking the community for suggestions for a replacement.

A few days later, on June 3, Startpage replaced the original text of their announcement with a defense of JavaScript, arguing that it really isn’t that bad, that there are lots of other things that can track you on the web besides JavaScript, and that we should just trust them because they are so trustworthy. The focus of this post isn’t to go into everything that is wrong with JavaScript. Suffice it to say that I strenuously disagree with Startpage on this issue: JavaScript is one hundred times more dangerous than all other web tracking technologies combined, and when it comes to the internet, I am not a Trust but Verify kind of guy. I have no Trust in the general internet. I am just a Verify kind of guy. And once JavaScript is enabled, it becomes impossible for me to verify that I am not being tracked.

Note that a few years ago Startpage made a big deal about how searches would work without the need for JavaScript. When they updated their post on June 3, they removed the section saying that they would be slowly rolling out by region the new version that required JavaScript. However, the title is still “Why Are We Requiring Users to Enable Javascript?”, which makes their intentions pretty clear.

The replacement I have settled on for the time being is Mojeek.

Mojeek has their own web crawler with their own index. Almost everyone else either buys their search results from Google or Microsoft, or they scrape them from Google or Microsoft. In the long term, the only viable privacy-focused search engine will be one that maintains its own index.

There are two big downsides to Mojeek. The first is that they do not yet have a public monetization plan. They exist entirely off of angel investor money. There is nothing inherently wrong with that, but at some point they will either need to become profitable or go out of business. If they had a public monetization plan I could analyze it and decide if it was respectful of user privacy. But when companies exist off of investor money and don’t have a public monetization plan, it often means that they don’t want to tell you what their monetization plan is because you wouldn’t like it. Usually their goal is to get their hooks into as many customers as possible, and then slowly boil the frog and hope that they don’t lose too many people when they show their true colors (see the history of every single non-open-source social media company and almost every single technology company).

To be clear, I think it is appropriate for a search engine to make money, and the most obvious way for them to do that is to include appropriate ads in their search results. Where I differ with most people is in my definition of appropriate ads. An appropriate ad in a search engine has at least two features.

- It is not based on any tracking of the user. It isn’t based on anything the search engine knows about what the user likes or what the user has done in the past or what accounts the user has on other services, because the search engine shouldn’t know any of these things about the user. It is only based on the search terms that are used for the query, and, possibly, some rough geolocation information from the IP address of the request, so that a person in New York searching for a plumber doesn’t end up with an ad for plumbers in Mexico.

- The ad URL does not include any tracking that lets the search engine know the user clicked on it. Instead, it takes the user directly to the site that posted the ad. This flies in the face of almost all the standard internet ad culture, which wants to know everything about everyone who clicks on every link.
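The second requirement can be checked mechanically, at least roughly. Here is a minimal sketch in Python, assuming a deliberately conservative rule: a link counts as direct only if it does not pass through the search engine's own host and carries no query string. The function name and the `search.example` and `plumber.example` hosts are hypothetical, made up for illustration, and a real check would need more nuance.

```python
from urllib.parse import urlsplit


def is_direct_ad_link(ad_url: str, search_engine_host: str) -> bool:
    """Return True if the ad link appears to go straight to the advertiser."""
    parts = urlsplit(ad_url)
    host = (parts.hostname or "").lower()
    engine = search_engine_host.lower()

    # A link to the search engine (or one of its subdomains) is a redirect
    # that lets the search engine record the click.
    goes_through_engine = host == engine or host.endswith("." + engine)

    # Conservative assumption: any query string could carry a click identifier.
    carries_parameters = bool(parts.query)

    return bool(host) and not goes_through_engine and not carries_parameters


if __name__ == "__main__":
    # Hypothetical example URLs.
    print(is_direct_ad_link("https://plumber.example/new-york", "search.example"))
    print(is_direct_ad_link("https://search.example/click?to=plumber.example", "search.example"))
```

The rule errs on the side of rejecting links, so it would also flag harmless query strings.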

Along these lines I appreciate what Troy Hunt had to say about the subject. I would like to see someone do something similar with a search engine.

The second big downside to Mojeek was pointed out to me by a user just before Privacy Browser 3.8.1 was released, which is that it doesn’t support Cyrillic queries.

I submitted the following to Mojeek through their contact page:

I am the developer of Privacy Browser <https://www.stoutner.com/privacy-browser/> and am in the process of making Mojeek the default search engine. However, a user informed me that searches involving Cyrillic characters return a 403 – Forbidden error. Is that intentional?

They responded by email with the following:

Thanks for getting in touch and really great to hear about your browser. Obviously we are delighted that you are looking to make Mojeek the default search engine.

At the moment we do not offer Cyrillic searches as our index does not currently contain crawled results which would provide anything useful. It takes a long time to build up an index and for the moment we are focused on improving our results in English, German, French, and some other (mainly Romance) languages.

It may well be that inclusion of Cyrillic characters returns a 403, sometimes. But that is certainly not intentional. I tried a few searches myself, with Cyrillic characters, and did not get a 403. The reason for the 403 is almost certainly due to our bot capturing software. Special characters can also occasionally return a 403 also.

Unfortunately a large amount of requests we get are from bots, and sometimes our software is overzealous, and punishes humans who are searching. Use of such characters is prevalent in many bots, but we don’t always get it right.

If you have anymore questions please do not hesitate to ask. And please do keep us in touch about progress with your browser.

I responded by asking if they had a timeline for when they would support Cyrillic searches. Their answer is below.

No, I am sorry to say we don’t. Given the size of our team and based on our current understanding of opportunities, it’s not in the planned future.

Currently we are working on Business Listings, Maps, Contextual Ads, Search Quality and expanding our servers 50%.

Needless to say, that is a disappointing response. There is a little more discussion on Mastodon. One of the requirements for a search engine to be included in Privacy Browser is that it must produce usable results. In English, the results are not as robust as those provided by Google, but they are good enough for a majority of searches. However, returning a 403 - Forbidden for every search from an entire set of languages doesn’t fit well under the usable results category. It might be good enough to remain in the included list of search engines, but not enough to be the default search engine.

This is another way of saying that I am already looking for another default search engine. Suggestions are welcome, but I recommend that you first take a look at the Mastodon thread on this subject, as well as looking over the various feature requests on Redmine, as the majority of communication I receive on this topic has already been addressed in depth.

I considered two other search engines for this transition. The one I was most interested in was Monocles, which is a highly customized Searx instance. Like all Searx instances, it doesn’t have its own index, but instead scrapes search results from other engines. Other engines don’t like that, and they often rate-limit Searx instances when they start scraping too much data, leading to problems like the following:

In conversing with Arne, who runs Monocles, we both agreed that Monocles search wouldn’t end up providing a good experience for Privacy Browser’s users. He has a long-term plan to replace his Searx instance with a YaCy instance. YaCy is promising because it crawls the web and builds its own index, like Mojeek. However, building a sufficiently large index to be competitive and maintaining it over time is a billion dollar project, which is why only a few companies operate in that space.

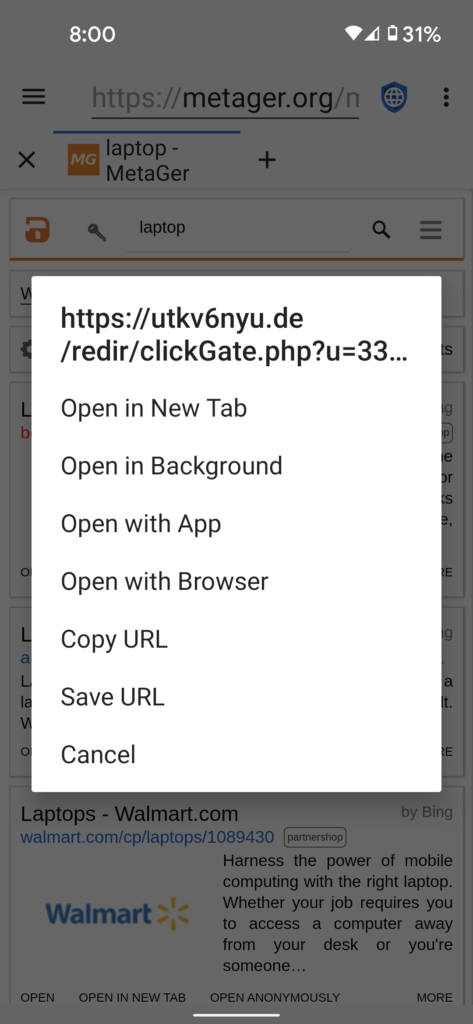

The other search engine I considered was MetaGer. The biggest problem is that they include tracking URLs on all their ads, which is a big no-no as I described above.

When I first released Privacy Browser I used DuckDuckGo as the default search engine. I liked a lot of things about DuckDuckGo in the early days, but they have gone downhill since then. When I dropped DuckDuckGo with the 2.12 release I wrote a blog post explaining the decision. Beyond the reasons listed there, about the time 2.12 was released I was contacted by a user who had loaded Privacy Browser into a virtual machine that tracks all connections an app makes and noticed that it contacted Amazon every time the app started. It turns out that this was because DuckDuckGo had moved their hosting to Amazon Web Services (AWS). That wasn’t how they had started out, as I had checked before I originally made them my default. Since that time, they have moved their hosting to Microsoft’s Azure platform.

$ nslookup duckduckgo.com

Name: duckduckgo.com

Address: 52.250.42.157

$ whois 52.250.42.157

NetRange: 52.224.0.0 - 52.255.255.255

CIDR: 52.224.0.0/11

NetName: MSFT

NetHandle: NET-52-224-0-0-1

Parent: NET52 (NET-52-0-0-0-0)

NetType: Direct Assignment

OriginAS:

Organization: Microsoft Corporation (MSFT)

AWS and Azure are both concerning because they are not like a colocation environment, where the owner of the data center has no visibility into machines that are owned and installed by a company like DuckDuckGo. Rather, everything runs in a virtual environment that is fully controlled by Amazon or Microsoft. They can spy on all the fully unencrypted data of every one of their customers. So, even if you trust DuckDuckGo to not log or track any of your searches, do you trust Microsoft not to steal all that data from DuckDuckGo, log it themselves, and track everything you do? And, DuckDuckGo wouldn’t even be able to tell if this were happening.
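For anyone who wants to double check the lookup above, this minimal Python sketch confirms that the resolved address falls inside the block whois attributes to Microsoft. It uses only the values copied from the output above. The address duckduckgo.com resolves to may differ by time and region, so treat those constants as a snapshot.

```python
import ipaddress

# Values copied from the nslookup and whois output above.
RESOLVED_ADDRESS = "52.250.42.157"
MICROSOFT_CIDR = "52.224.0.0/11"


def address_in_block(address: str, cidr: str) -> bool:
    """Return True if the IP address belongs to the given CIDR block."""
    return ipaddress.ip_address(address) in ipaddress.ip_network(cidr)


if __name__ == "__main__":
    network = ipaddress.ip_network(MICROSOFT_CIDR)
    # First and last addresses of the block, for comparison with NetRange.
    print(network[0], "-", network[-1])
    print(address_in_block(RESOLVED_ADDRESS, MICROSOFT_CIDR))
```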

Beyond that, I recently learned that for a long time the DuckDuckGo Android app tracked every website a user visited, even if they didn’t use a DuckDuckGo search to get there. They did this by requesting the website favorite icon through DuckDuckGo’s servers instead of directly from the website. Despite their protests to the contrary, there is absolutely no legitimate reason to do this. When it was reported to them they originally blew it off as not being a problem. It was only after a massive negative response that they relented. This wouldn’t have been a problem for using DuckDuckGo on Privacy Browser, as this only affected their own app. But it shows where their true priorities lie.

In Privacy Browser 2.12 I switched to using Searx as the default search engine. Originally, I used the searx.me instance, which was run by the developers of Searx. However, it was often rate limited, as described above. Eventually, they gave up hosting a public instance, and instead searx.me just has a list of other public instances, most of which instantly have problems whenever a bunch of people start using them.

Because of this, in Privacy Browser 3.2 I switched to Startpage, which brings us up to the present.

As is always the case, these changes only affect the defaults for new installations. People who currently have Startpage as their homepage and search engine will not notice any changes when they upgrade to 3.8.1. That is, until Startpage starts enforcing the requirement that JavaScript be enabled to perform a search. Additionally, any user can add Startpage as a custom search engine to their device if they so desire. The search URL is as follows:

https://www.startpage.com/do/search?query=
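To illustrate how a search URL like this is used, here is a minimal Python sketch that appends percent-encoded search terms to the prefix above. It assumes the terms are encoded as UTF-8 with spaces written as `+`, which is a common convention for query strings. The `build_search_url` helper is hypothetical, and this is not a description of how Privacy Browser itself builds the request.

```python
from urllib.parse import quote_plus, unquote_plus

# Search URL prefix quoted in the post above.
STARTPAGE_SEARCH_URL = "https://www.startpage.com/do/search?query="


def build_search_url(prefix: str, query: str) -> str:
    """Append the percent-encoded search terms to a search URL prefix."""
    # quote_plus encodes non-ASCII text as UTF-8 percent escapes
    # and replaces spaces with "+".
    return prefix + quote_plus(query)


if __name__ == "__main__":
    print(build_search_url(STARTPAGE_SEARCH_URL, "privacy browser"))
    # A Cyrillic query ends up as plain ASCII percent escapes.
    cyrillic_url = build_search_url(STARTPAGE_SEARCH_URL, "поиск")
    print(cyrillic_url)
    # Decoding the encoded part recovers the original terms.
    print(unquote_plus(cyrillic_url[len(STARTPAGE_SEARCH_URL):]))
```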